In the world of data engineering, data is our raw material. To build robust, efficient, and scalable data systems, we must have a deep understanding of this raw material in all its forms. This chapter is dedicated to the fundamental concepts of data that every data engineer must master. We will move beyond a superficial understanding and dive deep into the nuances of how data is structured, formatted, and modeled. We will explore the trade-offs between different data formats, the principles of effective data modeling, and the critical importance of data quality and governance. A solid grasp of these core concepts is not just academic; it is the foundation upon which all practical data engineering work is built. Without it, you will be like a chef who does not understand their ingredients.

2.1 The Spectrum of Data: From Structured to Unstructured¶

Data is not a monolith. It exists on a spectrum of structure, from the highly organized and predictable to the completely chaotic and unstructured. As a data engineer, you will be expected to work with data from all points on this spectrum. Understanding the characteristics, challenges, and opportunities of each type of data is the first step in designing effective data pipelines.

Structured Data: The World of Rows and Columns¶

What is it?

Structured data is the most traditional and well-understood form of data. It is highly organized and conforms to a rigid, predefined schema. Think of a table in a relational database, an Excel spreadsheet, or a CSV file. In structured data, we have clear, consistent columns (attributes) and rows (records). Each column has a specific data type (e.g., integer, string, date), and every row must adhere to this structure. The schema acts as a contract, guaranteeing that the data will be in a predictable format.

Examples:

A

customerstable in a PostgreSQL database with columns forcustomer_id,first_name,email, andregistration_date.A CSV file of sales transactions with columns for

order_id,product_id,quantity, andprice.An Excel spreadsheet of employee information with columns for

employee_id,department, andsalary.

Advantages:

Ease of Use: Structured data is easy to query and analyze using standard tools like SQL. The predictable structure makes it simple to write queries that filter, join, and aggregate the data.

Performance: Because the structure is known in advance, database systems can be highly optimized for storing and retrieving structured data.

Data Integrity: The rigid schema allows for the enforcement of data integrity rules, such as primary keys, foreign keys, and data type constraints, which helps to ensure the quality of the data.

Disadvantages:

Inflexibility: The biggest drawback of structured data is its inflexibility. If you need to add a new attribute or change the structure of the data, you often have to perform a schema migration, which can be a complex and time-consuming process. This makes it difficult to adapt to rapidly changing business requirements.

Limited Scope: Structured data is not well-suited for all types of data. It is difficult to store things like images, videos, or long-form text in a structured format without losing important context.

Semi-Structured Data: The Best of Both Worlds?¶

What is it?

Semi-structured data is the middle ground between the rigid world of structured data and the chaotic world of unstructured data. It does not conform to a formal data model like a relational database table, but it does contain tags, keys, or other markers that create a self-describing, hierarchical structure. This makes it more flexible than structured data but still provides a degree of organization that is missing in unstructured data.

Examples:

JSON (JavaScript Object Notation): This has become the de facto standard for semi-structured data. A JSON document representing a user might have nested objects for their address and a list of their social media profiles. Some users might have a phone number, while others might not. The structure can vary from document to document.

{ "userId": 123, "username": "johndoe", "email": "john.doe@example.com", "address": { "street": "123 Main St", "city": "Anytown" }, "interests": ["data engineering", "skiing", "chess"] }XML (eXtensible Markup Language): Another popular format for semi-structured data, often used in enterprise systems and configuration files. It uses tags to define elements and attributes.

<user> <userId>123</userId> <username>johndoe</username> <email>john.doe@example.com</email> <address> <street>123 Main St</street> <city>Anytown</city> </address> <interests> <interest>data engineering</interest> <interest>skiing</interest> <interest>chess</interest> </interests> </user>Log Files: Many application and server log files are semi-structured, with each line containing a timestamp, a log level, and a message that might be in a free-text format.

Advantages:

Flexibility: Semi-structured data is highly flexible. You can easily add new fields or change the structure without having to perform a schema migration. This makes it ideal for agile development environments and for handling data from sources with evolving schemas.

Hierarchical Data: It is naturally suited for representing hierarchical or nested data, which is difficult to model in a flat relational table.

Disadvantages:

Query Complexity: Querying semi-structured data can be more complex than querying structured data. While many modern databases provide powerful tools for querying JSON and XML, the queries can be more verbose and less intuitive than SQL on a relational table.

Performance Overhead: The flexibility of semi-structured data comes at a cost. The storage and query performance can be less efficient than with structured data because the database has to parse the structure of each document at read time.

Unstructured Data: The Wild West¶

What is it?

Unstructured data is data that has no predefined data model or is not organized in a predefined manner. It is the vast, messy, and chaotic majority of the world’s data. It is estimated that over 80% of all enterprise data is unstructured. This data is often qualitative rather than quantitative and does not fit neatly into rows and columns.

Examples:

Text: Emails, customer support tickets, social media posts, news articles, legal documents, and books.

Rich Media: Images, videos, and audio files.

Sensor Data: Raw data streams from IoT devices, which might be in a proprietary binary format.

Advantages:

Richness of Information: Unstructured data contains a wealth of information that is often lost in the process of structuring it. The sentiment of a customer review, the objects in an image, or the tone of a customer support call are all valuable pieces of information that are difficult to capture in a structured format.

Flexibility of Collection: You can collect and store unstructured data without having to first define a schema, which makes it easy to capture data from a wide variety of sources.

Disadvantages:

Difficulty of Processing: The biggest challenge with unstructured data is that it is very difficult to process and analyze using traditional tools. It requires advanced techniques from fields like Natural Language Processing (NLP), computer vision, and speech recognition to extract meaningful information.

Storage and Management: Storing and managing large volumes of unstructured data can be challenging. It often requires specialized storage systems like data lakes and object storage.

As a data engineer, your job is to build pipelines that can handle this entire spectrum of data. You might build a pipeline that extracts structured data from a relational database, combines it with semi-structured log data from a web server, and uses a machine learning model to extract sentiment from unstructured customer reviews. The ability to work with all three types of data is a key skill for the modern data engineer.

2.2 Data Formats: The Language of Data Storage¶

Once you understand the structure of your data, the next step is to decide how to store it on disk. The choice of data format is one of the most important decisions a data engineer can make. It has a profound impact on storage costs, query performance, and compatibility with other tools in the ecosystem. A poor choice of format can lead to slow pipelines and high cloud bills, while a good choice can make your systems fast, efficient, and cost-effective. In this section, we will take a deep dive into the most common data formats, exploring their internal structure, their strengths and weaknesses, and when to use them.

Row-Based vs. Columnar Formats: A Fundamental Divide¶

Before we look at specific formats, we need to understand a fundamental distinction in how data can be laid out on disk: row-based vs. columnar.

Row-Based Formats

In a row-based format, all the data for a single row is stored together on disk. This is the traditional way of storing data, used by most relational databases and simple formats like CSV.

How it works: Imagine a table of employees. In a row-based format, the data for the first employee (ID, name, department, salary) is written to disk, followed by the data for the second employee, and so on.

Row 1: [1, "Alice", "Engineering", 80000] Row 2: [2, "Bob", "Marketing", 60000] Row 3: [3, "Charlie", "Engineering", 90000]Best for: Row-based formats are optimized for transactional workloads (OLTP) where you need to read or write entire rows at a time. For example, if you want to retrieve all the information about a specific employee, a row-based format is very efficient because all the data is stored together.

Columnar Formats

In a columnar format, all the data for a single column is stored together on disk.

How it works: In our employee table, all the employee IDs would be stored together, followed by all the names, all the departments, and all the salaries.

Column 1 (ID): [1, 2, 3] Column 2 (Name): ["Alice", "Bob", "Charlie"] Column 3 (Department): ["Engineering", "Marketing", "Engineering"] Column 4 (Salary): [80000, 60000, 90000]Best for: Columnar formats are optimized for analytical workloads (OLAP) where you are running queries that aggregate data from a small number of columns. For example, if you want to calculate the average salary of all employees, a columnar format is extremely efficient because you only need to read the

salarycolumn from disk. You can completely ignore the data for the other columns. This is a huge performance advantage when you have tables with hundreds of columns but your query only needs a few of them.

A Deep Dive into Common Data Formats¶

Now, let’s explore the specific data formats that you will encounter as a data engineer.

CSV (Comma-Separated Values): The Lingua Franca¶

CSV is the simplest and most ubiquitous data format. It is a text-based, row-oriented format where each line is a data record and each record consists of one or more fields, separated by commas.

Strengths:

Human-Readable: You can open a CSV file in any text editor and easily understand its contents.

Universal Support: Virtually every data tool, from Excel to Python to Spark, can read and write CSV files.

Simplicity: It is very easy to create a CSV file. You don’t need any special libraries or tools.

Weaknesses:

No Schema Enforcement: A CSV file has no way of enforcing a schema. You can have rows with different numbers of columns, and there is no way to specify the data type of each column. This can lead to data quality issues and parsing errors.

No Data Type Support: Everything in a CSV file is a string. When you read a CSV file, you have to infer the data types of the columns, which can be error-prone. Is

"123"the string"123"or the integer123?Poor Compression: As a text-based format, CSV does not compress well. This can lead to high storage costs and slow network transfer times for large datasets.

Inefficient for Analytics: Because it is a row-based format, it is very inefficient for analytical queries that only need to access a subset of columns.

When to use it: CSV is a good choice for small datasets, for exporting data to be used by non-technical users in tools like Excel, and for simple data exchange between systems. It should generally be avoided for large-scale data storage and analytics in a data lake or data warehouse.

JSON (JavaScript Object Notation): The Language of the Web¶

JSON is a text-based, semi-structured format that has become the standard for data exchange on the web. It is the language of modern APIs.

Strengths:

Flexible Schema: JSON’s flexible schema makes it ideal for handling data with nested structures and varying attributes.

Human-Readable: Like CSV, JSON is human-readable, which makes it easy to debug.

Wide Support: It is natively supported by almost every programming language and a wide range of databases.

Weaknesses:

Verbose: JSON can be quite verbose, with all the keys and brackets, which can lead to larger file sizes compared to binary formats.

Not Ideal for Analytics: Like CSV, JSON is a row-based format and is not optimized for analytical queries.

No Standard for Large Files: While there is a format called JSON Lines (or NDJSON) where each line is a separate JSON object, there is no single standard for storing massive JSON datasets, which can lead to compatibility issues.

When to use it: JSON is the perfect choice for API responses, configuration files, and for storing semi-structured data in NoSQL document databases like MongoDB. It is also a common format for raw data ingested into a data lake before it is transformed into a more efficient format.

Apache Parquet: The King of Columnar¶

Parquet is a binary, columnar storage format that was designed from the ground up for efficient data storage and analytics. It is the most common data format used in modern data lakes and is a cornerstone of the big data ecosystem.

Strengths:

Columnar Storage: As a columnar format, it is extremely efficient for analytical queries that only access a subset of columns.

Excellent Compression: Parquet uses advanced compression techniques that are applied on a per-column basis. Because all the data in a column is of the same type, it can be compressed very effectively. It is not uncommon to see compression ratios of 10:1 or more compared to uncompressed CSV.

Schema Evolution: Parquet is a self-describing format that stores the schema within the file. It supports schema evolution, which means you can add new columns to a table without having to rewrite all the old data.

Predicate Pushdown: Parquet files are organized into row groups, and for each row group, it stores statistics about the data in each column (e.g., min, max, count). This allows query engines like Spark to perform “predicate pushdown,” where they can skip reading entire row groups if the data in those row groups does not match the filter conditions of the query. This can lead to massive performance gains.

Weaknesses:

Not Human-Readable: As a binary format, you cannot open a Parquet file in a text editor.

Slower for Writes: The complex encoding and compression can make writing Parquet files slower than writing simpler formats like CSV.

When to use it: Parquet is the recommended format for storing large datasets in a data lake for analytical purposes. If you are building a data platform on Spark, Presto, or any other modern query engine, you should be using Parquet.

Apache Avro: The Champion of Schema Evolution¶

Avro is a binary, row-based format that was designed with a strong focus on schema evolution and data serialization. It is a popular choice for streaming data pipelines and for data exchange between services.

Strengths:

Rich Schema Definition: Avro schemas are defined in JSON and are very expressive. They support complex data types, including nested records, arrays, and enums.

Robust Schema Evolution: Avro’s key feature is its robust support for schema evolution. The schema used to write the data is stored with the data, and Avro provides a clear set of rules for how schemas can evolve (e.g., adding or removing fields) while maintaining compatibility between readers and writers. This is incredibly important in a streaming context where different microservices might be producing and consuming data with slightly different versions of the schema.

Compact Format: As a binary format, Avro is much more compact than JSON.

Language Support: Avro has excellent support for code generation in many programming languages. You can take an Avro schema and generate Java or Python classes that make it easy to work with the data.

Weaknesses:

Row-Based: Because it is a row-based format, it is not as efficient for analytical queries as Parquet.

When to use it: Avro is the ideal choice for serializing events in a streaming data pipeline, especially when using a schema registry with Apache Kafka. It is also a good choice for storing the raw, ingested data in a data lake before it is transformed into Parquet for analytics.

Apache ORC (Optimized Row Columnar): The Other Columnar Format¶

ORC is another binary, columnar storage format that originated in the Hadoop ecosystem. It is very similar to Parquet in its goals and features.

Strengths:

Columnar Storage and Compression: Like Parquet, it provides excellent compression and performance for analytical queries.

ACID Transactions: ORC has built-in support for ACID transactions, which is a key feature of data warehouse systems like Apache Hive.

Weaknesses:

Less Widespread Adoption: While ORC is a very capable format, Parquet has seen broader adoption outside of the traditional Hadoop ecosystem.

When to use it: ORC is a great choice if you are working heavily within the Hadoop and Hive ecosystem. For new projects, Parquet is often the more common choice.

Choosing the Right Format: A Practical Guide¶

| Format | Type | Best Use Case | Compression | Schema Evolution | Ecosystem |

|---|---|---|---|---|---|

| CSV | Row-based, Text | Small datasets, human-readable exports | Poor | None | Universal |

| JSON | Row-based, Text | APIs, semi-structured data | Moderate | Flexible | Universal |

| Avro | Row-based, Binary | Streaming data (Kafka), schema evolution | Good | Excellent | Strong in Kafka/Hadoop |

| Parquet | Columnar, Binary | Data lakes, analytical queries (Spark) | Excellent | Good | Strong in Spark/Big Data |

| ORC | Columnar, Binary | Data lakes, analytical queries (Hive) | Excellent | Good | Strong in Hive/Hadoop |

A Common Data Lake Strategy:

A common and effective strategy for a data lake is to use a multi-stage approach with different data formats at each stage:

Bronze Layer (Raw Data): Ingest raw data from source systems and store it in its original format or in a flexible format like Avro. This layer is all about capturing the data as-is, with a focus on schema evolution.

Silver Layer (Cleaned and Conformed Data): Clean, deduplicate, and conform the raw data, and store it in a highly efficient columnar format like Parquet. This layer provides a clean, queryable source of truth for the entire organization.

Gold Layer (Aggregated and Business-Ready Data): Create aggregated data marts and business-level tables from the silver layer, also stored in Parquet. This layer is designed for specific business use cases and is what data analysts and BI tools will query.

By understanding the deep trade-offs between these different data formats, you can design a data platform that is not only functional but also highly performant and cost-effective.

2.3 Data Modeling: The Blueprint for Your Data¶

Data modeling is the process of creating a conceptual representation of data objects, the associations between them, and the rules that govern them. It is one of the most critical skills for a data engineer. A well-designed data model is like a well-designed blueprint for a house: it ensures that the structure is sound, that everything has its proper place, and that it can be easily extended in the future. A poorly designed data model, on the other hand, can lead to a host of problems, including poor query performance, data integrity issues, and difficulty in adapting to new business requirements. In this section, we will explore the key concepts and techniques of data modeling, from the traditional relational world to the modern analytical world.

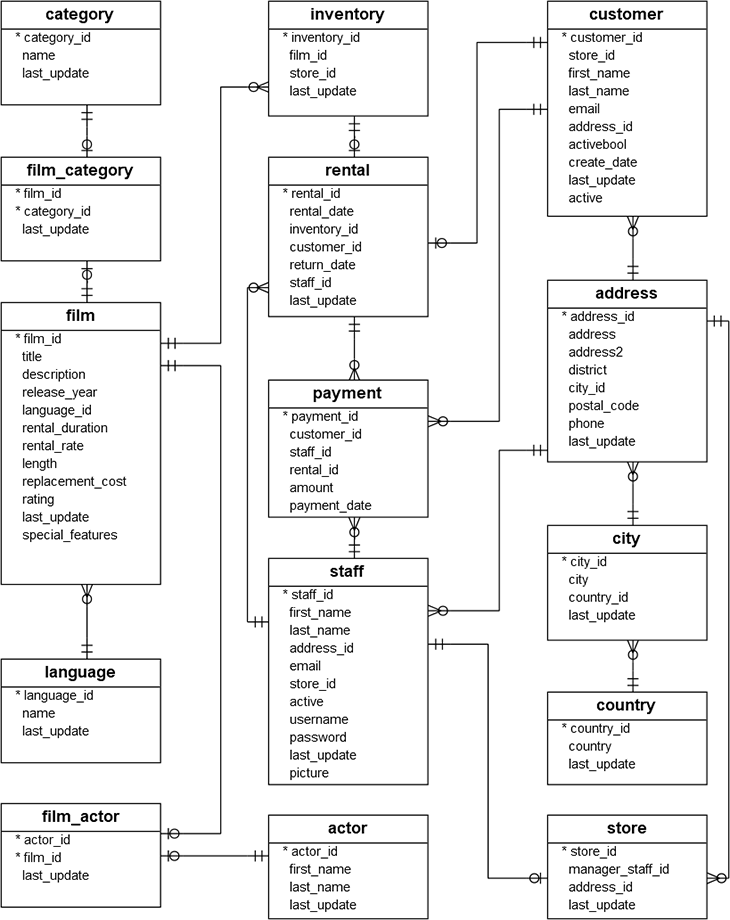

Entity-Relationship (ER) Modeling: The Foundation of Relational Design¶

Entity-Relationship (ER) modeling is the cornerstone of relational database design. It is a top-down approach to database design that starts by identifying the key business entities and the relationships between them. The output of this process is an Entity-Relationship Diagram (ERD), which is a visual representation of the data model.

Key Concepts of ER Modeling:

Entity: An entity is a real-world object or concept that you want to store data about. Examples include

Customer,Product,Order.Attribute: An attribute is a property or characteristic of an entity. For example, the

Customerentity might have attributes likefirstName,lastName, andemail.Relationship: A relationship is an association between two or more entities. For example, a

CustomerplacesanOrder.Cardinality: Cardinality defines the number of instances of one entity that can be associated with the number of instances of another entity. The common cardinalities are:

One-to-One (1:1): Each instance of entity A is associated with exactly one instance of entity B. For example, a

Userhas oneProfile.One-to-Many (1:M): Each instance of entity A can be associated with many instances of entity B, but each instance of entity B is associated with only one instance of entity A. For example, a

Customercan have manyOrders.Many-to-Many (M:N): Each instance of entity A can be associated with many instances of entity B, and each instance of entity B can be associated with many instances of entity A. For example, a

Productcan be in manyOrders, and anOrdercan contain manyProducts. Many-to-many relationships are typically implemented using a junction table (e.g.,order_items).

Figure 1:Figure 2.1. An entity relationship diagram showing the core entities and their relationships.

Normalization: The Art of Reducing Redundancy¶

Normalization is the process of organizing the columns and tables of a relational database to minimize data redundancy. The goal is to ensure that each piece of data is stored in exactly one place. This has several benefits:

Reduces Data Redundancy: Storing the same piece of data in multiple places wastes space and can lead to inconsistencies.

Improves Data Integrity: When data is stored in only one place, it is much easier to keep it accurate and up-to-date. An update to a piece of data only needs to be made in one place.

Avoids Anomalies: Normalization helps to avoid insertion, update, and deletion anomalies. For example, without normalization, you might not be able to add a new customer until they have placed an order, or deleting a customer might also delete all their order history.

Normalization is achieved by following a series of rules called normal forms. The most common normal forms are:

First Normal Form (1NF): A table is in 1NF if all its columns contain atomic (indivisible) values, and each row is unique. For example, you cannot have a

phone_numberscolumn that contains a comma-separated list of phone numbers. You would need to create a separatephone_numberstable.Second Normal Form (2NF): A table is in 2NF if it is in 1NF and all its non-key attributes are fully functionally dependent on the entire primary key. This is mainly relevant for tables with composite primary keys.

Third Normal Form (3NF): A table is in 3NF if it is in 2NF and all its attributes are dependent only on the primary key, not on other non-key attributes. This is about eliminating transitive dependencies. For example, if you have a table with

employee_id,department_name, anddepartment_head, and thedepartment_headis determined by thedepartment_name, you should split this into two tables: one for employees and one for departments.

For most OLTP systems, striving for 3NF is a good goal. It provides a good balance between data integrity and query performance.

Denormalization: Trading Integrity for Speed¶

While normalization is crucial for OLTP systems, it can be detrimental to the performance of analytical queries. A highly normalized schema often requires many joins to retrieve data, which can be slow and complex. Denormalization is the process of deliberately introducing redundancy into a database design to improve query performance. This is a common practice in data warehousing and analytical databases.

For example, in a normalized e-commerce database, to get the name of the category for a product in an order, you might have to join order_items to products to categories. In a denormalized analytical database, you might add the category_name directly to the sales fact table, eliminating the need for a join.

The trade-offs of denormalization:

Improved Query Performance: This is the main reason for denormalization. Fewer joins mean faster queries.

Increased Storage: You are storing redundant data, which takes up more space.

More Complex ETL: The ETL process that populates the denormalized tables becomes more complex, as it is now responsible for maintaining the consistency of the redundant data.

Dimensional Modeling: Designing for Analytics¶

Dimensional modeling is a data modeling technique specifically designed for building data warehouses and analytical databases. It is a highly denormalized approach that organizes data in a way that is intuitive for business users and optimized for analytical queries. The two key components of a dimensional model are facts and dimensions.

Fact Tables: A fact table is the central table in a dimensional model. It contains the quantitative, numerical data (the “facts” or “measures”) that you want to analyze. Examples of facts include

sales_amount,quantity_sold, andprofit. Fact tables also contain foreign keys that link to the dimension tables.Dimension Tables: Dimension tables contain the descriptive, qualitative attributes that provide the context for the facts. They answer the “who, what, where, when, why” questions. Examples of dimensions include

Customer,Product,Date, andStore.

The most common type of dimensional model is the star schema.

The Star Schema

In a star schema, a central fact table is surrounded by its associated dimension tables, creating a structure that looks like a star. The dimension tables are highly denormalized and contain a wide range of attributes.

Advantages of the Star Schema:

Simplicity: The star schema is very simple and easy for business users to understand. The relationships are clear, and it is easy to see how to slice and dice the data.

Query Performance: The denormalized structure means that most queries only require a join between the fact table and a few dimension tables, which is very fast.

BI Tool Compatibility: Most business intelligence (BI) tools are designed to work well with star schemas.

The Snowflake Schema

A snowflake schema is a variation of the star schema where the dimension tables are normalized. For example, the Product dimension might be normalized into Product, Category, and Brand tables. This creates a more complex, snowflake-like structure.

Advantages of the Snowflake Schema:

Reduced Redundancy: By normalizing the dimensions, you reduce data redundancy and save storage space.

Disadvantages of the Snowflake Schema:

Increased Complexity: The snowflake schema is more complex and harder for users to understand.

Slower Query Performance: It requires more joins to answer queries, which can be slower than a star schema.

In modern data warehousing, with the low cost of storage and the power of modern query engines, the star schema is almost always the preferred approach. The simplicity and performance benefits of the star schema usually outweigh the storage savings of the snowflake schema.

Data modeling is a deep and nuanced topic, but by mastering these fundamental concepts of ER modeling, normalization, and dimensional modeling, you will be well-equipped to design data systems that are both robust and performant, for both transactional and analytical workloads.

2.4 Data Storage Paradigms: Choosing the Right Home for Your Data¶

Beyond the logical design of your data model, a data engineer must also make critical decisions about the physical storage of the data. Different business problems require different storage solutions. A system designed to handle millions of small, fast transactions has very different requirements from a system designed to run complex analytical queries over petabytes of historical data. Understanding the different data storage paradigms is essential for building a data platform that is both performant and cost-effective.

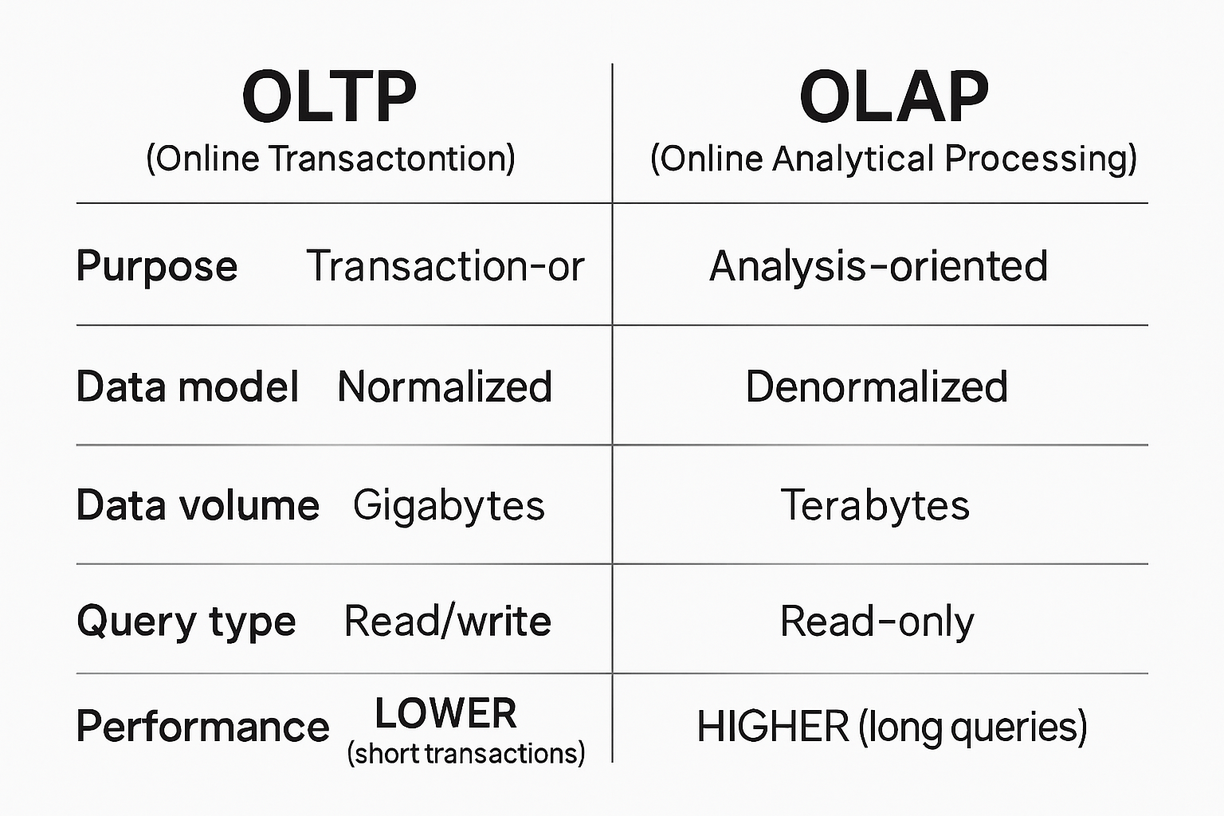

OLTP (Online Transaction Processing): The Engine of Business¶

What is it?

OLTP systems are the workhorses of the digital economy. They are designed to power the day-to-day operational applications that run the business. Think of an e-commerce site processing orders, a banking system handling ATM transactions, or an airline reservation system booking flights. These systems are characterized by a large number of concurrent users performing a high volume of short, simple transactions (inserts, updates, and deletes).

Key Characteristics:

Focus on Transactions: The primary goal is to process transactions quickly and reliably.

ACID Compliance: OLTP systems must be ACID-compliant (Atomicity, Consistency, Isolation, Durability) to guarantee the integrity of the data. Every transaction must be a success or a failure, with no in-between states.

High Concurrency: They must be able to handle thousands of concurrent users and transactions without performance degradation.

Fast Response Times: Queries are typically simple and must return results in milliseconds.

Normalized Data Model: OLTP systems almost always use a highly normalized (typically 3NF) data model to ensure data integrity and avoid redundancy.

Technology Examples: PostgreSQL, MySQL, Oracle, SQL Server.

OLAP (Online Analytical Processing): The Engine of Insight¶

What is it?

While OLTP systems are about running the business, OLAP systems are about understanding the business. They are designed for complex analytical queries over large volumes of historical data. The goal is to support business intelligence, reporting, and data-driven decision-making. Instead of processing individual transactions, OLAP systems are designed to aggregate and analyze data from many different sources.

Key Characteristics:

Focus on Analytics: The primary goal is to enable complex, ad-hoc queries.

Read-Heavy Workloads: OLAP systems are optimized for read-heavy workloads. Data is typically loaded in large batches, and updates are infrequent.

Complex Queries: Queries often involve joins across many tables, complex aggregations, and window functions.

Slower Response Times: Queries can take seconds or even minutes to run, which is acceptable for analytical workloads.

Denormalized Data Model: OLAP systems almost always use a denormalized dimensional model (star or snowflake schema) to optimize query performance.

Technology Examples: Amazon Redshift, Google BigQuery, Snowflake, Apache Druid.

Figure 2:Figure 2.2. A comparison of OLTP and OLAP workloads.

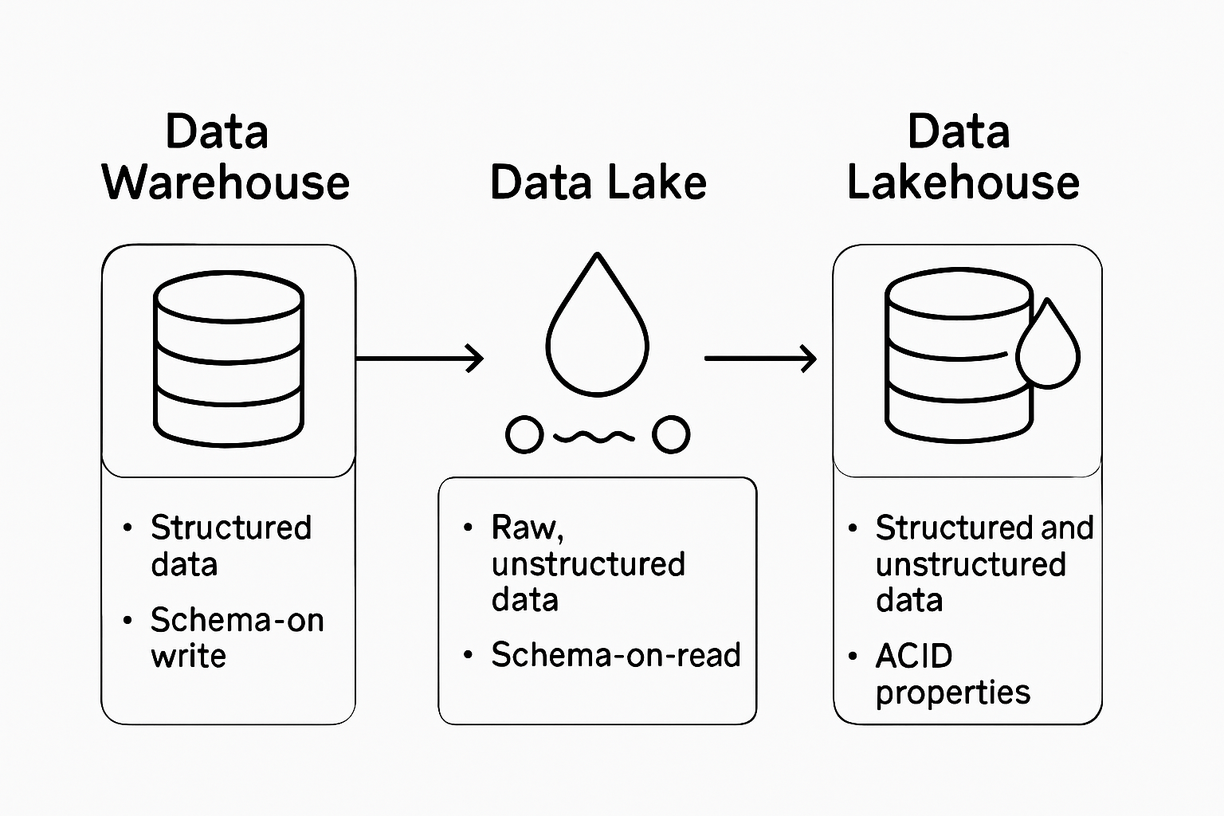

Data Warehouse: The Single Source of Truth for Analytics¶

What is it?

A data warehouse is a centralized repository of integrated data from one or more disparate sources. It is a specialized type of OLAP system that is designed to be the single source of truth for an organization’s analytical data. The data in a data warehouse is cleaned, transformed, and structured for a specific purpose: to make it easy to query and analyze.

Key Characteristics:

Subject-Oriented: Data is organized around key business subjects, such as

Customer,Product, andSales.Integrated: Data is integrated from multiple sources and transformed into a consistent format.

Time-Variant: A data warehouse stores historical data, allowing for trend analysis over time.

Non-Volatile: Data in a data warehouse is typically not updated or deleted. New data is added, but old data is preserved.

Data Lake: The Repository for Raw Data¶

What is it?

A data lake is a centralized repository that allows you to store all your structured, semi-structured, and unstructured data at any scale. Unlike a data warehouse, which requires you to define a schema before you can load data (schema-on-write), a data lake allows you to store data in its raw, native format and apply a schema when you read the data (schema-on-read). This provides immense flexibility.

Key Characteristics:

Stores All Data: A data lake can store any type of data, from relational tables to log files to images and videos.

Schema-on-Read: You don’t have to define a schema upfront. This makes it easy to ingest data from a wide variety of sources without having to first transform it.

Cost-Effective Storage: Data lakes are typically built on top of low-cost object storage, such as Amazon S3 or Alibaba Cloud OSS, which makes it affordable to store massive amounts of data.

Decoupled Compute and Storage: The storage and compute layers are separate, which allows you to scale them independently.

However, the flexibility of data lakes can also be a drawback. Without proper governance and metadata management, a data lake can quickly turn into a “data swamp,” a messy and unusable repository of data that no one understands.

The Lakehouse: The Best of Both Worlds¶

What is it?

The lakehouse is a new, modern data architecture that combines the best features of data lakes and data warehouses. It aims to provide the low-cost, flexible storage of a data lake with the ACID transactions, data governance, and query performance of a data warehouse. This is achieved by adding a transactional metadata layer on top of the data lake.

Key Technologies:

Delta Lake: An open-source storage layer that brings ACID transactions to data lakes.

Apache Iceberg: An open-format table format for huge analytic datasets.

Apache Hudi: A streaming data lake platform that provides upserts and incremental data processing.

Key Characteristics:

ACID Transactions: The lakehouse supports ACID transactions, which means you can perform reliable inserts, updates, and deletes directly on your data lake.

Schema Enforcement and Evolution: It enforces a schema on write, which prevents data quality issues, but it also provides mechanisms for evolving the schema over time.

Time Travel: The transactional log allows you to query previous versions of your data, which is useful for auditing, debugging, and reproducing machine learning experiments.

Unified Batch and Streaming: The lakehouse architecture is designed to handle both batch and streaming data in a unified way.

Figure 3:Figure 2.3. The main paradigms used to store and organize data.

2.5 Data Quality and Governance: The Foundation of Trust¶

Data is only valuable if it is trustworthy. If the business does not trust the data, they will not use it to make decisions, and all the effort spent on building data pipelines will be wasted. Data quality and data governance are the disciplines that ensure the data is accurate, consistent, and used in a compliant and secure manner. For a data engineer, these are not afterthoughts; they are a core part of the job.

The Dimensions of Data Quality¶

Data quality is a multi-faceted concept. It is often defined by a set of key dimensions:

Accuracy: Is the data correct? Does it accurately represent the real-world entity it is describing?

Completeness: Is the data complete? Are there missing values or records?

Consistency: Is the data consistent across different systems and over time?

Timeliness: Is the data available when it is needed? Is it up-to-date?

Uniqueness: Are there duplicate records in the data?

Validity: Does the data conform to the defined schema and business rules?

Implementing a Data Quality Strategy¶

Ensuring data quality is not a one-time fix; it is a continuous process that must be embedded throughout the data engineering lifecycle.

A multi-layered approach:

At the Source: Whenever possible, fix data quality issues at the source. This is the most effective way to prevent bad data from propagating through your systems.

In the Pipeline: Build automated data quality checks and validation rules into your data pipelines. Tools like Great Expectations and dbt tests can be used to define and enforce data quality rules.

Monitoring and Alerting: Set up monitoring and alerting to detect data quality issues as soon as they occur. This allows you to quickly identify and fix problems before they impact downstream users.

Data Quality Dashboards: Create dashboards to track data quality metrics over time. This provides visibility into the health of your data and helps to build trust with your stakeholders.

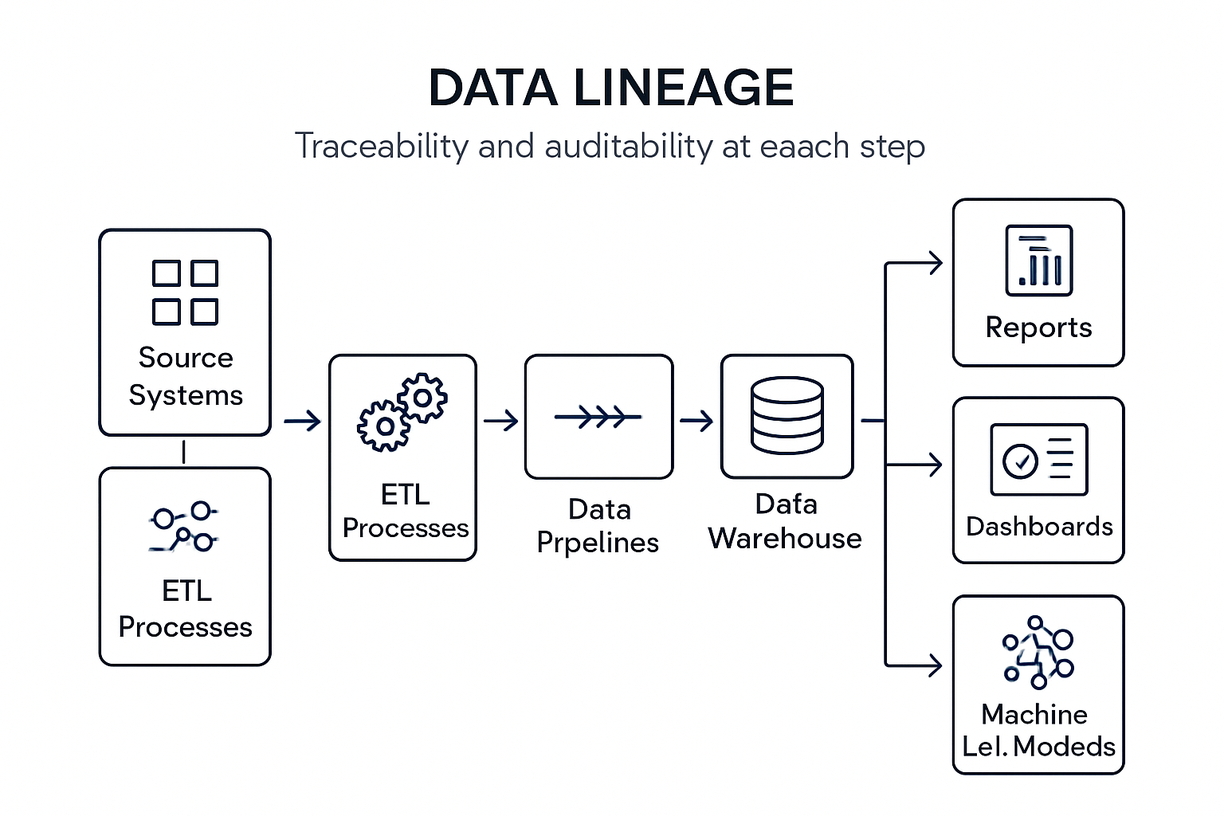

Data Governance: Managing Data as a Strategic Asset¶

Data governance is a broader discipline that encompasses data quality but also includes policies and procedures for how data is managed, accessed, and used. It is about treating data as a strategic asset.

Key pillars of data governance:

Data Stewardship: Assigning ownership and responsibility for data assets to specific individuals or teams.

Data Cataloging and Metadata Management: Creating a centralized catalog of all your data assets, including their schemas, descriptions, and lineage. This makes it easy for users to discover and understand the data that is available.

Data Lineage: Tracking the flow of data from its source to its final destination. This is crucial for impact analysis, root cause analysis, and compliance.

Data Security and Privacy: Implementing controls to ensure that data is accessed and used in a secure and compliant manner. This includes managing access controls, encrypting sensitive data, and complying with regulations like GDPR and CCPA.

Figure 4:Figure 2.4. Data lineage from source systems through downstream transformations and consumers.

Chapter Summary¶

In this chapter, we have taken a comprehensive tour of the core concepts of data that every data engineer must understand. We have explored the spectrum of data from structured to unstructured, and we have dived deep into the trade-offs of different data formats like CSV, JSON, Parquet, and Avro. We have also covered the critical skill of data modeling, learning about ER modeling and normalization for OLTP systems, and dimensional modeling and the star schema for OLAP systems. We have examined the different data storage paradigms, from the traditional data warehouse to the modern lakehouse. Finally, we have discussed the crucial importance of data quality and governance in building trustworthy data systems.

With this strong conceptual foundation, you are now ready to start exploring the specific tools and technologies that you will use to implement these concepts in the real world. In the next chapter, we will begin this practical journey by exploring the vibrant open-source ecosystem that powers modern data engineering.