Figure 1.1. Data is the new oil.

In the 21st century, the phrase “data is the new oil” has become a ubiquitous cliché, but like many clichés, it holds a profound truth. Data, in its raw form, is a crude, unrefined resource. It is a torrent of information flowing from every corner of our digital world: every click on a website, every transaction in a store, every sensor reading from a smart device, every post on social media. This raw data, much like crude oil, is full of potential, but it is not immediately useful. It is messy, inconsistent, and often overwhelming. To unlock its value, it must be discovered, collected, cleaned, processed, and transformed into a reliable, usable, and accessible product. This is the work of data engineering.

Data engineering is the discipline of designing, building, and maintaining the systems and infrastructure that allow an organization to collect, store, process, and analyze data at scale. It is the invisible backbone of the data-driven world, the sophisticated machinery that refines raw data into actionable insights, powers machine learning models, and enables business intelligence. Without data engineering, data science is a theoretical exercise, and business analytics is a guessing game. It is the foundational layer upon which all other data-related disciplines are built.

This book, “Data Engineering in Action,” is designed to be your comprehensive guide to this critical field. We will move beyond the theoretical and dive deep into the practical, hands-on skills you need to succeed as a data engineer. We will explore the tools, technologies, and best practices that are used every day in real-world data engineering, with a strong emphasis on the open-source ecosystem that has become the de facto standard in the industry. We will also use Alibaba Cloud as a practical platform to demonstrate how these open-source tools can be deployed and scaled in a modern cloud environment.

Our goal is to equip you not just with the “what” but with the “why” and the “how.” Why choose a data lake over a data warehouse? How do you build a streaming data pipeline that can handle millions of events per second? Why is data quality not just a checkbox but a continuous process? By the end of this book, you will have the knowledge and the confidence to answer these questions and to build robust, scalable, and reliable data platforms.

1.1 The Rise of the Data-Driven Organization

Before we dive into the technical details of data engineering, it is important to understand the business context in which it operates. The last two decades have seen a seismic shift in how organizations operate, moving from intuition-based decision-making to a culture of data-driven insights. A data-driven organization is one that has embedded data and analytics into the core of its business processes and decision-making. This is not just about having a few data analysts in a corner; it is about a fundamental cultural shift where data is treated as a strategic asset.

In a data-driven organization:

Decisions are based on evidence, not just gut feeling. Instead of relying on the highest-paid person’s opinion (HiPPO), decisions are backed by data and analysis.

Data is democratized. Employees across the organization, not just in the data team, have access to the data they need to do their jobs effectively.

Experimentation is the norm. New ideas are tested and validated with A/B tests and other data-driven methods.

Data is a shared language. Different departments can collaborate more effectively because they are all looking at the same source of truth.

The benefits of becoming a data-driven organization are immense. Companies that embrace this culture are more agile, more efficient, and more innovative. They can better understand their customers, optimize their operations, and identify new business opportunities. According to a study by McKinsey, data-driven organizations are 23 times more likely to acquire customers, 6 times more likely to retain them, and 19 times more likely to be profitable.

However, becoming a data-driven organization is not easy. It requires a significant investment in technology, people, and processes. At the heart of this transformation is the data engineer, who builds the reliable, scalable, and accessible data infrastructure that makes it all possible. Without a solid data engineering foundation, the promise of a data-driven organization remains just that: a promise.

1.2 The Anatomy of a Modern Data Team

A successful data-driven organization is powered by a team of specialists, each with a distinct but complementary role. While the specific titles and responsibilities can vary between companies, a mature data team typically includes several key players. Understanding these roles, their responsibilities, and how they interact is crucial for an aspiring data engineer. It helps you see where you fit in the bigger picture and how your work enables others.

Figure 1.2. The roles within a modern data team.

The Data Architect: The Master Planner

If a data platform were a city, the Data Architect would be the urban planner who designs the layout of the entire city. They don’t lay the bricks for every building, but they create the master blueprint that dictates where the roads, residential areas, industrial zones, and public utilities should go. The Data Architect is a senior, strategic role focused on the high-level design of the organization’s data ecosystem, ensuring it is scalable, secure, and aligned with long-term business goals.

Core Responsibilities:

Enterprise Data Strategy: The Data Architect works with business leaders to understand their objectives and translates them into a comprehensive data strategy. This includes defining how data will be acquired, stored, managed, and consumed across the entire organization.

Technology Selection and Standardization: They are responsible for evaluating and selecting the core technologies that will form the data platform. Should the company use Snowflake, BigQuery, or build its own data warehouse on-premises? Should they use Kafka or Pulsar for streaming? The architect makes these high-stakes decisions and sets the standards for how these tools are used.

Data Modeling and Governance: The architect designs the conceptual and logical data models for the enterprise. They establish the principles of data governance, including data quality standards, metadata management, and data security policies. They create the framework that data engineers and analysts will work within.

Roadmap Development: They create a long-term roadmap for the data platform, planning for future growth, new technologies, and evolving business needs.

A Day in the Life of a Data Architect:

A Data Architect’s day is typically filled with meetings, whiteboarding sessions, and documentation. They might spend the morning meeting with the Head of Marketing to understand the requirements for a new customer analytics platform. The afternoon could be spent evaluating two different data catalog tools, followed by a session with the data engineering team to review their proposed design for a new data pipeline, ensuring it aligns with the overall architecture. They spend less time writing code and more time creating diagrams, writing design documents, and communicating their vision to both technical and non-technical stakeholders.

The Data Engineer: The Builder and Maintainer

If the Data Architect is the planner, the Data Engineer is the civil engineer and construction crew who builds the city’s infrastructure. They take the architect’s blueprints and turn them into reality. They build the roads (data pipelines), the water treatment plants (data cleaning and transformation processes), and the power grid (the compute infrastructure). Data Engineers are the hands-on builders who construct and maintain the data pipelines and platforms that the rest of the organization relies on.

Core Responsibilities:

Data Ingestion: Data Engineers build the pipelines that collect data from a wide variety of sources. This can involve writing code to pull data from third-party APIs, setting up Change Data Capture (CDC) to replicate data from production databases, or configuring connectors to ingest data from streaming sources like Kafka.

Data Transformation: This is the heart of data engineering. They build ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) pipelines to clean, enrich, aggregate, and reshape raw data into a usable format. This involves writing complex SQL queries or using frameworks like Apache Spark or dbt (Data Build Tool).

Data Storage and Warehousing: They are responsible for managing the data storage systems, whether it’s a data lake, a data warehouse, or a modern lakehouse. This includes designing table schemas, optimizing storage for performance and cost, and managing data lifecycle policies.

Infrastructure Management: Data Engineers often manage the underlying infrastructure for the data platform. This can involve using Infrastructure as Code (IaC) tools like Terraform to provision and manage cloud resources, configuring and tuning Spark clusters, or managing a Kubernetes environment for running data applications.

Automation and Orchestration: A key part of their job is to automate everything. They use workflow orchestration tools like Apache Airflow or Dagster to schedule, monitor, and manage their data pipelines, ensuring they run reliably and efficiently.

Data Quality and Monitoring: They are the guardians of data quality. They build automated tests and monitoring systems to ensure that the data is accurate, complete, and fresh. When a pipeline breaks or data quality issues are detected, they are the first responders.
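To make the ingestion, transformation, and storage responsibilities above more concrete, here is a minimal sketch of an extract-transform-load flow. It uses only the Python standard library, with an in-memory SQLite database standing in for a warehouse. The sample records, the `orders` table, and all function names are hypothetical and chosen purely for illustration.

```python
import sqlite3

# Hypothetical raw records, standing in for rows pulled from a source API.
RAW_ORDERS = [
    {"order_id": "1", "amount": "19.99", "country": "us"},
    {"order_id": "2", "amount": "5.00", "country": "DE "},
    {"order_id": "3", "amount": None, "country": "us"},  # missing amount
]


def extract():
    """Extract: return raw records from the (simulated) source."""
    return list(RAW_ORDERS)


def transform(records):
    """Transform: drop incomplete rows, cast types, normalize country codes."""
    cleaned = []
    for record in records:
        if record["amount"] is None:
            continue  # skip rows that cannot be priced
        cleaned.append(
            (
                int(record["order_id"]),
                float(record["amount"]),
                record["country"].strip().upper(),
            )
        )
    return cleaned


def load(conn, rows):
    """Load: write cleaned rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, country TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


def run_pipeline():
    """Run the three stages in order and return the connection for querying."""
    conn = sqlite3.connect(":memory:")
    load(conn, transform(extract()))
    return conn
```

Calling `run_pipeline()` returns a connection whose `orders` table holds only the two complete rows, with country codes normalized. In an ELT variant, the raw rows would be loaded first and the cleaning step would run inside the warehouse instead.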

A Day in the Life of a Data Engineer:

A Data Engineer’s day is a mix of development, operations, and collaboration. They might start the day by investigating a failed pipeline run from the previous night. After fixing the bug, they might spend the rest of the morning writing a Python script to ingest data from a new API. In the afternoon, they could have a meeting with a data analyst to understand their requirements for a new data model, followed by a few hours of writing and testing dbt models to transform the raw data. They end the day by deploying their new pipeline to production and monitoring its first run.

The Data Analyst: The Interpreter and Storyteller

Once the data infrastructure is built and the data is processed, it’s time to extract value from it. The Data Analyst is the interpreter who translates the clean, structured data into actionable business insights. They are the bridge between the data and the business stakeholders, helping them understand what the data is saying and how to use it to make better decisions.

Core Responsibilities:

Exploratory Data Analysis (EDA): Data Analysts dive into the data to understand its characteristics, identify trends, and spot anomalies. They use their strong SQL skills to query the data warehouse and their statistical knowledge to make sense of what they find.

Reporting and Dashboarding: A core part of their job is to build and maintain reports and dashboards using BI tools like Tableau, Power BI, or Looker. These dashboards provide business users with self-service access to key metrics and performance indicators.

Insight Generation: They go beyond just reporting the numbers. They analyze the data to answer specific business questions, such as “Why did sales decline in the last quarter?” or “Which marketing campaign had the highest ROI?”

Communication and Storytelling: A great data analyst is a great storyteller. They can take a complex analysis and communicate the key findings in a clear, concise, and compelling way to a non-technical audience. They don’t just present charts; they present a narrative that drives action.

A Day in the Life of a Data Analyst:

A Data Analyst’s day is focused on querying, visualizing, and communicating. They might spend the morning working on a complex SQL query to pull data for an ad-hoc request from the marketing team. In the afternoon, they could be building a new dashboard in Tableau to track customer churn. They might end the day by presenting their findings on the effectiveness of a recent product launch to the product management team.

The Data Scientist: The Innovator and Predictor

The Data Scientist is the forward-looking member of the data team. While the Data Analyst is often focused on understanding what happened in the past, the Data Scientist is focused on predicting what will happen in the future. They use their expertise in statistics and machine learning to build predictive models and algorithms that can solve complex business problems.

Core Responsibilities:

Machine Learning Model Development: This is the core of their work. They build and train machine learning models to do things like predict customer churn, recommend products, detect fraud, or forecast demand.

Feature Engineering: They work closely with data engineers to create the features (input variables) that are used to train their models. This often involves a deep understanding of the business domain and creative thinking about how to represent data in a way that is meaningful to a model.

Experimentation and A/B Testing: They design and analyze experiments to test new ideas and measure the impact of their models. For example, they might run an A/B test to see if a new recommendation algorithm actually increases sales.

Research and Development: Data Scientists are often responsible for staying up-to-date with the latest research in machine learning and identifying new opportunities to apply these techniques to the business.

A Day in the Life of a Data Scientist:

A Data Scientist’s day is a mix of research, coding, and analysis. They might spend the morning reading a new research paper on natural language processing. In the afternoon, they could be writing Python code in a Jupyter notebook to train a new classification model. They might end the day by analyzing the results of an A/B test and preparing a presentation for the business stakeholders on whether to launch the new model.

Emerging Roles: The Specialists

As the field of data matures, new specialized roles are emerging to bridge the gaps between the core roles.

The Analytics Engineer: This role sits at the intersection of data engineering and data analysis. Analytics Engineers are focused on the transformation layer of the data stack. They use their strong SQL and data modeling skills to build clean, reliable, and well-documented data models in the data warehouse, often using tools like dbt. They are less concerned with the infrastructure and ingestion pipelines (the domain of the data engineer) and more focused on creating the perfect data sets for the data analysts to use. They are, in essence, building a data product for the rest of the company.

The Machine Learning Engineer (MLE): This role sits at the intersection of data science and software engineering. While a data scientist is focused on building and experimenting with models, the Machine Learning Engineer is focused on productionizing those models. They build the infrastructure and pipelines to deploy, monitor, and manage machine learning models at scale. This includes building training pipelines, setting up model serving infrastructure, and implementing MLOps (Machine Learning Operations) best practices. They are the ones who take a model from a Jupyter notebook to a production system serving millions of users.

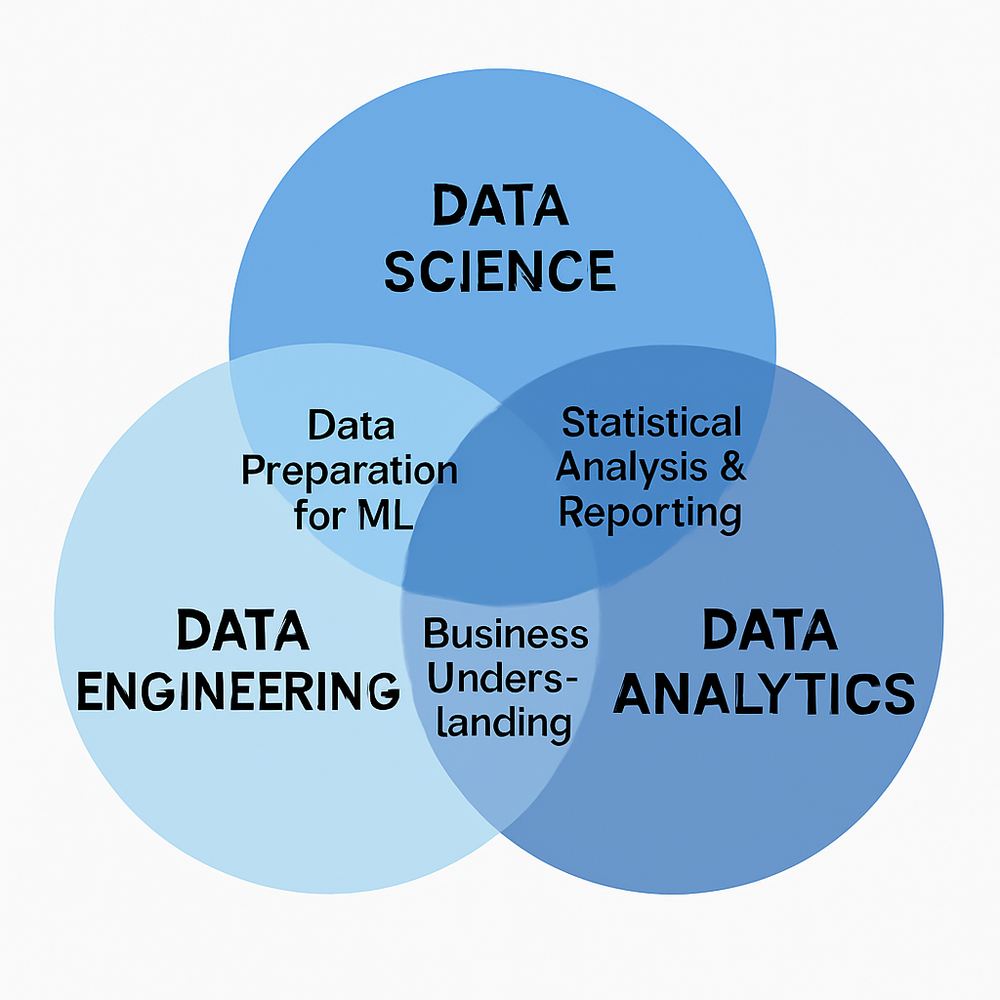

The Venn Diagram of Data Roles

The relationship between these roles can be visualized as a set of overlapping circles, each representing a different set of skills and responsibilities.

Figure 1.3. The overlap between data engineering, data science, and data analytics roles.

Data Engineering is the foundation, focused on programming, software engineering, and building the infrastructure.

Data Science is focused on math, statistics, and machine learning.

Data Analytics is focused on business acumen, communication, and storytelling.

No single person can be an expert in all of these areas. A successful data team is one that brings together people with different skills and backgrounds to collaborate effectively. As a data engineer, your primary customers are the data analysts and data scientists on your team. Your job is to empower them by providing them with the clean, reliable, and accessible data they need to do their jobs. When you do your job well, you make everyone else on the team more effective.

1.3 The Modern Data Landscape: A World of Challenges and Opportunities

The role of the data engineer has been shaped by the dramatic evolution of the data landscape over the last two decades. We have moved from a world of relatively small, structured datasets stored in on-premises relational databases to a world of massive, complex, and fast-moving data streams generated in the cloud. Understanding the key trends that define this modern landscape is essential for appreciating the challenges that data engineers solve every day.

The Deluge of Big Data: Understanding the Five Vs

The term “Big Data” has been a buzzword for years, but it represents a very real and fundamental shift in the nature of data. The concept is often defined by the “Five Vs,” which provide a useful framework for understanding the challenges and characteristics of modern datasets.

1. Volume: The Sheer Scale of Data

This is the most obvious characteristic. We are generating data at an unprecedented scale. A single autonomous vehicle can generate terabytes of data per day. A large e-commerce site can process millions of transactions per hour. A social media platform can generate petabytes of new content daily. This massive volume overwhelms traditional single-machine data processing tools and techniques. A process that works on a few gigabytes of data can grind to a halt when faced with a few terabytes. Data engineers must design systems that can scale horizontally, distributing the storage and processing of data across clusters of commodity hardware.
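The idea of horizontal scaling can be illustrated with a small sketch. Records are assigned to workers by hashing a key, each worker aggregates its own shard independently, and the partial results are merged at the end. The function names and the `(user_id, amount)` record shape are assumptions made for this example. Everything runs in a single process here, whereas a real cluster would run each shard on a separate machine.

```python
import zlib
from collections import Counter, defaultdict


def partition(events, num_workers):
    """Assign each event to a worker by hashing its key (stable across runs)."""
    shards = defaultdict(list)
    for user_id, amount in events:
        shard = zlib.crc32(user_id.encode("utf-8")) % num_workers
        shards[shard].append((user_id, amount))
    return shards


def local_aggregate(shard_events):
    """Work done independently on one worker: sum amounts per user."""
    totals = Counter()
    for user_id, amount in shard_events:
        totals[user_id] += amount
    return totals


def merge(partials):
    """Combine the per-worker results into the final answer."""
    result = Counter()
    for partial in partials:
        result.update(partial)  # Counter.update adds values key by key
    return dict(result)


events = [("alice", 10), ("bob", 5), ("alice", 7), ("carol", 1), ("bob", 2)]
shards = partition(events, num_workers=3)
totals = merge(local_aggregate(shard) for shard in shards.values())
```

Because every event for a given user lands on the same shard, each worker can compute its totals without talking to the others. That independence is what lets this pattern scale by adding machines.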

2. Velocity: The Speed of Data

Data is not only getting bigger; it is also getting faster. In the past, data was often processed in batches, perhaps overnight. Today, businesses demand real-time insights. A credit card company needs to detect fraudulent transactions in milliseconds, not hours. A logistics company needs to track its fleet of vehicles in real time. A social media company needs to surface trending topics as they happen. This high velocity of data requires a shift from batch processing to stream processing. Data engineers must build pipelines that can ingest, process, and analyze data as it arrives, enabling immediate action and decision-making.

3. Variety: The Complexity of Data

Data is no longer confined to the neat rows and columns of relational databases. The modern data landscape is a complex mix of structured, semi-structured, and unstructured data. Structured data (like sales records from a database) is still important, but it is now joined by semi-structured data (like JSON logs from web servers) and unstructured data (like text from customer reviews, images from social media, and video from security cameras). This variety of data requires a flexible data platform that can store and process different data types. Data engineers must be proficient in working with a wide range of data formats and tools, from SQL databases to NoSQL databases, from Parquet files to video streams.

4. Veracity: The Quality and Trustworthiness of Data

With the explosion in data sources comes a new challenge: ensuring the quality and trustworthiness of the data. Raw data is often messy, incomplete, and inaccurate. It can be full of typos, missing values, and conflicting information. If you feed garbage into your data pipelines, you will get garbage out. This is the principle of “Garbage In, Garbage Out” (GIGO). Data veracity refers to the accuracy and reliability of the data. Data engineers must build robust data quality checks and validation processes into their pipelines to ensure that the data is clean and trustworthy. Without a focus on veracity, the insights derived from the data will be flawed, and the business decisions based on those insights will be misguided.
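As a rough illustration of what such checks can look like, the sketch below counts rows with missing required fields and rows with duplicate keys, then decides whether a batch is clean enough to load. The function names, the report fields, and the thresholds are all hypothetical. Production pipelines typically rely on a dedicated testing framework rather than hand-written checks like these.

```python
def quality_report(rows, required_fields, key_field):
    """Summarize missing values and duplicate keys in a list of dict rows."""
    rows_with_missing = 0
    duplicate_keys = 0
    seen_keys = set()
    for row in rows:
        # A field counts as missing if it is absent, None, or an empty string.
        if any(row.get(field) in (None, "") for field in required_fields):
            rows_with_missing += 1
        key = row.get(key_field)
        if key in seen_keys:
            duplicate_keys += 1
        seen_keys.add(key)
    return {
        "row_count": len(rows),
        "rows_with_missing": rows_with_missing,
        "duplicate_keys": duplicate_keys,
    }


def passes(report, max_missing_ratio=0.0, allow_duplicates=False):
    """Decide whether a batch may be loaded (thresholds are hypothetical)."""
    if report["row_count"] == 0:
        return False  # an empty batch is treated as a failure in this sketch
    missing_ratio = report["rows_with_missing"] / report["row_count"]
    if missing_ratio > max_missing_ratio:
        return False
    return allow_duplicates or report["duplicate_keys"] == 0
```

A pipeline could call `passes(quality_report(...))` before its load step and stop, or quarantine the batch, when the check fails, so that bad data never reaches downstream consumers.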

5. Value: The Ultimate Goal

This is the most important V. Collecting and storing massive amounts of data is useless if you cannot extract value from it. The ultimate goal of any data initiative is to create business value, whether that is by improving operational efficiency, enhancing the customer experience, or creating new revenue streams. The role of the data engineer is to build the platform that enables the organization to unlock this value. This requires not just technical skills but also a good understanding of the business and what it is trying to achieve.

The Shift to Real-Time: From Batch to Streaming

One of the most significant trends in the modern data landscape is the shift from batch processing to real-time or stream processing.

Batch processing is the traditional approach where data is collected and processed in large chunks or batches. A classic example is a nightly ETL job that pulls data from a transactional database, transforms it, and loads it into a data warehouse. This approach is simple and efficient for many use cases, but it has a significant drawback: latency. The insights derived from the data are only as fresh as the last batch run, so with a nightly job they can lag the real world by up to a day.

Stream processing, on the other hand, is about processing data as it arrives, typically within milliseconds or seconds. This enables real-time analytics and immediate action. Examples of stream processing use cases include:

Real-time fraud detection: Analyzing credit card transactions as they happen to block fraudulent ones.

Real-time monitoring: Monitoring sensor data from industrial equipment to predict failures before they happen.

Real-time personalization: Updating product recommendations on an e-commerce site based on a user’s real-time browsing behavior.

Building streaming data pipelines is significantly more complex than building batch pipelines. It requires a different set of tools (like Apache Kafka and Apache Flink) and a different way of thinking about data. Data engineers must now be proficient in both batch and stream processing to meet the diverse needs of the business.
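One way to see the difference in mindset is a tumbling-window count, a common building block in stream processing. The sketch below is plain Python rather than Kafka or Flink code. It consumes events one at a time and emits a result each time a fixed-size time window closes, instead of waiting for the full dataset. The function name and the `(timestamp, key)` event shape are assumptions for this example, and it assumes events arrive in timestamp order. Handling late and out-of-order events is where much of the real complexity lies.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds):
    """Yield (window_start, counts) each time a window closes.

    `events` is an iterable of (timestamp_seconds, key) pairs, assumed to
    arrive in timestamp order. Late or out-of-order events are not handled.
    """
    current_window = None
    counts = defaultdict(int)
    for ts, key in events:
        window_start = ts - (ts % window_seconds)
        if current_window is not None and window_start != current_window:
            # The previous window is complete: emit it and start a new one.
            yield current_window, dict(counts)
            counts = defaultdict(int)
        current_window = window_start
        counts[key] += 1
    if current_window is not None:
        # Flush the last, possibly partial, window when the input ends.
        yield current_window, dict(counts)


events = [(0, "click"), (3, "view"), (9, "click"), (10, "click"), (25, "view")]
windows = list(tumbling_window_counts(events, window_seconds=10))
```

With 10-second windows, the first result is available as soon as the event at second 10 arrives, rather than after the whole input has been read. An equivalent batch job would produce the same totals only once all the data had been collected.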

The Dominance of the Cloud: A New Paradigm for Data Infrastructure

The rise of cloud computing, led by providers like Amazon Web Services (AWS), Google Cloud Platform (GCP), and Alibaba Cloud, has completely transformed how we build and manage data infrastructure. In the past, building a data platform meant buying, racking, and stacking physical servers in a data center. This was a slow, expensive, and inflexible process. The cloud has changed all of that.

Key benefits of the cloud for data engineering:

Elasticity and Scalability: The cloud provides virtually unlimited access to compute and storage resources on demand. You can spin up a thousand-node Spark cluster to run a massive data processing job and then shut it down an hour later, paying only for what you used. This elasticity allows you to build systems that can handle spiky workloads and scale with your data growth.

Managed Services: Cloud providers offer a rich set of managed services for data engineering, such as managed databases (like Amazon RDS), data warehouses (like Google BigQuery), and data processing platforms (like AWS Glue). These services handle the underlying infrastructure management, allowing data engineers to focus on building pipelines and creating value, not on patching servers.

Cost-Effectiveness: The cloud’s pay-as-you-go pricing model can be much more cost-effective than the large upfront investment required for on-premises infrastructure. The separation of compute and storage in modern cloud data warehouses also allows for independent scaling and cost optimization.

Global Reach: Cloud providers have data centers all over the world, making it easy to build globally distributed applications and comply with data residency regulations.

While the cloud offers immense power and flexibility, it also introduces new complexities. Data engineers must now be proficient in cloud technologies and understand how to design and manage secure, cost-effective, and reliable cloud-based data platforms.

The Rise of AI and Machine Learning: A New Demand for Data

The recent explosion in artificial intelligence (AI) and machine learning (ML) has created a voracious new appetite for data. Machine learning models, especially deep learning models, require massive amounts of high-quality training data to be effective. This has placed a new set of demands on data engineers.

How data engineering enables AI/ML:

Building Training Data Pipelines: Data engineers build the pipelines that collect, clean, and transform raw data into the labeled training datasets that data scientists use to build their models.

Feature Engineering at Scale: They help data scientists create and manage features for their models, often building and maintaining centralized “feature stores” that allow for the reuse of features across different models.

Building ML Pipelines: They work with machine learning engineers to build the automated pipelines that train, evaluate, and deploy models to production.

Real-time Inference: They build the low-latency data pipelines that feed real-time data to models in production for real-time predictions.
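The feature store idea mentioned above can be sketched as a simple lookup structure. Features are written once, keyed by entity and feature name, and different models then read whichever subset they need. The class below is a toy, in-memory illustration with hypothetical names. Real feature stores also deal with versioning, point-in-time correctness, and low-latency serving, none of which is shown here.

```python
class InMemoryFeatureStore:
    """Toy feature store: maps (entity_id, feature_name) to the latest value."""

    def __init__(self):
        self._values = {}

    def put(self, entity_id, feature_name, value):
        """Write (or overwrite) one feature value for one entity."""
        self._values[(entity_id, feature_name)] = value

    def get_vector(self, entity_id, feature_names, default=None):
        """Return features in the requested order, so each model gets a stable layout."""
        return [
            self._values.get((entity_id, name), default)
            for name in feature_names
        ]


store = InMemoryFeatureStore()
store.put("user_42", "orders_last_30d", 3)
store.put("user_42", "avg_order_value", 27.5)
store.put("user_42", "days_since_signup", 120)

# Two hypothetical models reuse the same stored features.
churn_features = store.get_vector("user_42", ["orders_last_30d", "days_since_signup"])
ltv_features = store.get_vector("user_42", ["avg_order_value", "orders_last_30d"])
```

The point of the design is reuse. A feature such as `orders_last_30d` is computed and stored once, and both models read it, instead of each team rebuilding it in its own pipeline.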

As AI and ML become more integrated into business, the role of the data engineer becomes even more critical. They are the ones who provide the fuel for the AI engine.

In summary, the modern data landscape is a dynamic and exciting world of massive scale, incredible speed, and complex variety. It is a world of immense challenges, but also of incredible opportunities. As a data engineer, you are at the center of this world, building the systems that will power the next generation of data-driven innovation.

1.4 The Data Engineering Lifecycle: From Requirement to Production

To build robust and reliable data systems, data engineers follow a structured process known as the data engineering lifecycle. This lifecycle provides a framework for taking a data project from an initial business idea all the way to a production system that delivers continuous value. While the specific steps can vary, the lifecycle generally consists of a series of well-defined phases. Understanding this lifecycle is crucial for managing projects, collaborating with stakeholders, and ensuring that the final product meets the business needs.

Phase 1: Requirement Gathering and Analysis

Every data engineering project begins with a business need. This phase is all about understanding that need and translating it into a set of technical requirements. This is a critical phase that requires close collaboration with stakeholders, who could be data analysts, data scientists, business users, or product managers.

Key Activities:

Understanding the Business Problem: What is the business trying to achieve? Are they trying to build a new dashboard, create a new machine learning model, or launch a new data-driven product feature? The data engineer must understand the “why” behind the request.

Identifying Data Sources: Where will the data come from? Is it in a production database, a third-party API, a set of log files, or a streaming platform? The data engineer needs to investigate the availability, accessibility, and quality of the source data.

Defining Success Metrics: How will we know if the project is successful? This involves defining key performance indicators (KPIs) for the data pipeline, such as data freshness, accuracy, and completeness, as well as the business KPIs that the project is intended to impact.

Scoping the Project: What is the scope of the minimum viable product (MVP)? It is important to break down a large project into smaller, manageable phases to deliver value incrementally.

Example: A data analyst wants to build a dashboard to track daily user engagement. In this phase, the data engineer would work with the analyst to understand which specific metrics are needed (e.g., daily active users, session duration, feature adoption), where the raw user event data is stored (e.g., in Kafka), and what the required data freshness is (e.g., the dashboard should be updated every hour).
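Requirements like these are easier to verify later if they are written down in a machine-checkable form. The sketch below records the dashboard example as a small Python data structure and turns the hourly freshness requirement into a check. The class name, field names, and the `is_fresh` helper are hypothetical. It shows one possible way to capture requirements, not a standard format.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class PipelineRequirements:
    """Requirements agreed with the stakeholder (all names are illustrative)."""
    name: str
    metrics: tuple
    source: str
    max_staleness: timedelta


def is_fresh(requirements, last_updated, now):
    """Return True if the data was refreshed within the agreed window."""
    return now - last_updated <= requirements.max_staleness


ENGAGEMENT_DASHBOARD = PipelineRequirements(
    name="user_engagement_dashboard",
    metrics=("daily_active_users", "session_duration", "feature_adoption"),
    source="kafka",
    max_staleness=timedelta(hours=1),  # "updated every hour"
)

now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
recent = datetime(2024, 1, 1, 11, 30, tzinfo=timezone.utc)
stale = datetime(2024, 1, 1, 10, 30, tzinfo=timezone.utc)
```

Here `is_fresh(ENGAGEMENT_DASHBOARD, recent, now)` holds, while the same check with `stale` fails. A check like this can later back a freshness alert, so the success metric defined in this phase is the same one monitored in production.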

Phase 2: Design and Architecture

Once the requirements are clear, the next step is to design the solution. In this phase, the data engineer creates the technical blueprint for the data pipeline and the overall data platform. This is where key architectural decisions are made that will impact the project for years to come.

Key Activities:

Choosing the Right Tools: Based on the requirements, the data engineer selects the appropriate tools for each part of the pipeline. Which tool should be used for ingestion? Which for transformation? Which for storage? This requires a deep understanding of the available technologies and their trade-offs.

Designing the Data Model: How will the data be structured in the data warehouse or data lake? The data engineer designs the table schemas, paying close attention to data types, partitioning strategies, and optimization for query performance.

Designing the Data Flow: The data engineer creates a diagram that shows how data will flow from the source to the target, including all the intermediate transformation steps. This is often represented as a Directed Acyclic Graph (DAG).

Planning for Scalability, Reliability, and Security: The design must account for future growth, potential failures, and security requirements. How will the system scale if the data volume increases by 10x? What happens if a pipeline fails? How will sensitive data be protected?

Example: For the user engagement dashboard, the data engineer might design a pipeline that uses Apache Flink to read data from Kafka in real time, perform some initial cleaning and aggregation, and write the results to a Delta Lake table in the data lake. A separate dbt project then transforms this lightly processed data into a clean, aggregated data model that is optimized for the analyst’s queries.
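A data flow like this one can be written down as a DAG in a few lines. The sketch below uses `graphlib.TopologicalSorter` from the Python standard library (Python 3.9 or later) to derive a valid execution order and to reject a design that contains a cycle. The task names are hypothetical labels for the steps in the example and are not real Flink or dbt identifiers.

```python
from graphlib import CycleError, TopologicalSorter

# Each task maps to the set of tasks it depends on (hypothetical names).
PIPELINE = {
    "clean_events": {"read_events"},
    "write_raw_table": {"clean_events"},
    "build_engagement_model": {"write_raw_table"},
    "run_quality_checks": {"write_raw_table"},
    "refresh_dashboard": {"build_engagement_model", "run_quality_checks"},
}


def execution_order(dag):
    """Return one valid order in which every task runs after its dependencies."""
    return list(TopologicalSorter(dag).static_order())


def is_acyclic(dag):
    """Return False if the graph contains a dependency cycle."""
    try:
        execution_order(dag)
        return True
    except CycleError:
        return False


order = execution_order(PIPELINE)
```

In the resulting order, `read_events` always comes first and `refresh_dashboard` last, while the two tasks that depend only on `write_raw_table` may appear in either order. That freedom is what allows an orchestrator to run independent branches in parallel.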

Phase 3: Development and Implementation

This is the phase where the data engineer rolls up their sleeves and starts building. They take the design from the previous phase and turn it into working code and infrastructure.

Key Activities:

Writing Code: This is the core of the development phase. The data engineer writes the code for the data pipelines, which could be in SQL, Python, Scala, or Java, depending on the tools being used.

Infrastructure as Code (IaC): The data engineer uses tools like Terraform or AWS CloudFormation to define and provision the required cloud infrastructure (e.g., databases, storage buckets, compute clusters) in a repeatable and automated way.

Building Transformation Logic: The data engineer writes the SQL queries or Spark jobs that transform the raw data into the desired format.

Implementing Data Quality Checks: The data engineer adds automated data quality tests to the pipeline to ensure that the data is accurate and reliable.

Example: The data engineer writes the Flink job to process the Kafka data, creates the Terraform scripts to provision the necessary AWS resources (like an EMR cluster and an S3 bucket), and writes the dbt models to create the final user engagement data mart.
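To give a feel for the transformation logic in such a project, here is a minimal sketch of a daily-active-users query. It runs against an in-memory SQLite database from Python so that it is self-contained. The `raw_events` table, its columns, and the sample rows are assumptions made for this example. In the pipeline described above, comparable SQL would live in a dbt model and run in the warehouse.

```python
import sqlite3

# Transformation logic: one row per day with the number of distinct users.
DAU_SQL = """
SELECT date(event_ts) AS event_date,
       COUNT(DISTINCT user_id) AS daily_active_users
FROM raw_events
WHERE user_id IS NOT NULL
GROUP BY date(event_ts)
ORDER BY event_date
"""


def build_demo_db():
    """Create an in-memory table with a few hypothetical user events."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE raw_events (user_id TEXT, event_type TEXT, event_ts TEXT)"
    )
    conn.executemany(
        "INSERT INTO raw_events VALUES (?, ?, ?)",
        [
            ("u1", "login", "2024-01-01 09:00:00"),
            ("u1", "click", "2024-01-01 09:05:00"),
            ("u2", "login", "2024-01-01 12:30:00"),
            (None, "click", "2024-01-01 13:00:00"),  # anonymous event, excluded
            ("u1", "login", "2024-01-02 08:00:00"),
        ],
    )
    return conn


def daily_active_users(conn):
    """Run the transformation and return (event_date, daily_active_users) rows."""
    return conn.execute(DAU_SQL).fetchall()


dau = daily_active_users(build_demo_db())
```

Note the two decisions embedded in the query: users are counted once per day no matter how many events they generate, and events without a user ID are excluded. Both are business rules that should be confirmed with the analyst rather than assumed.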

Phase 4: Testing and Quality Assurance

Before a data pipeline can be deployed to production, it must be thoroughly tested to ensure that it is working correctly and that the data it produces is accurate. This is a critical phase that is often overlooked but is essential for building trust in the data.

Key Activities:

Unit Testing: The data engineer writes unit tests for individual components of the pipeline, such as a specific transformation function or a data quality check.

Integration Testing: The data engineer tests the entire pipeline from end to end to ensure that all the components work together correctly.

Data Validation: The data engineer validates that the data produced by the pipeline is correct. This can involve comparing the output data with the source data, checking for nulls and duplicates, and verifying that the business logic has been implemented correctly.

Performance Testing: The data engineer tests the pipeline’s performance under load to ensure that it can handle the expected data volume and meet the required data freshness SLAs.

Example: The data engineer writes unit tests for their dbt models, runs the entire pipeline on a staging environment with a sample of production data, and writes a series of data validation queries to check that the final user engagement metrics are calculated correctly.
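As an illustration of unit testing a single transformation, the sketch below tests a small Python function with the standard library's `unittest` module. The function `engagement_rate` and its rules (for example, returning 0.0 when there are no users) are hypothetical and chosen only to show the pattern. Tests for dbt models are declared differently, but the idea of pinning down expected behavior, including edge cases, is the same.

```python
import unittest


def engagement_rate(active_users: int, total_users: int) -> float:
    """Share of users who were active; defined as 0.0 when there are no users."""
    if active_users < 0 or total_users < 0:
        raise ValueError("user counts must be non-negative")
    if total_users == 0:
        return 0.0
    return active_users / total_users


class EngagementRateTest(unittest.TestCase):
    def test_typical_case(self):
        self.assertAlmostEqual(engagement_rate(25, 100), 0.25)

    def test_no_users_returns_zero(self):
        self.assertEqual(engagement_rate(0, 0), 0.0)

    def test_negative_input_is_rejected(self):
        with self.assertRaises(ValueError):
            engagement_rate(-1, 10)


# Run the tests programmatically so the script does not exit the interpreter.
suite = unittest.defaultTestLoader.loadTestsFromTestCase(EngagementRateTest)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

Note that two of the three tests cover edge cases rather than the happy path. In data pipelines, empty inputs and invalid values are where most surprises come from.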

Phase 5: Deployment and Productionization

Once the pipeline has been tested and validated, it is time to deploy it to production. This phase is about moving the code and infrastructure from the development environment to the live production environment.

Key Activities:

CI/CD (Continuous Integration/Continuous Deployment): The data engineer sets up a CI/CD pipeline to automate the process of building, testing, and deploying the data pipeline. This ensures that changes can be deployed to production quickly and reliably.

Orchestration: The data engineer schedules the pipeline to run automatically using a workflow orchestration tool like Apache Airflow. The pipeline is defined as a DAG (directed acyclic graph) of tasks, which is configured with the correct dependencies, retries, and alerting.

Monitoring and Alerting: The data engineer sets up monitoring dashboards and alerts to track the health and performance of the pipeline in production. This includes monitoring for pipeline failures, data quality issues, and performance degradation.

Example: The data engineer creates a Jenkins or GitHub Actions pipeline that automatically runs the tests and deploys the dbt project and the Flink job to production whenever a change is merged to the main branch. They then create an Airflow DAG to run the dbt models every hour and set up alerts in PagerDuty to notify the on-call engineer if the DAG fails.
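To show what "dependencies, retries, and alerting" mean mechanically, here is a toy orchestrator written with Python's standard library (`graphlib` requires Python 3.9 or later). It is not Airflow's API and leaves out scheduling entirely. The task names, the retry budget, and the `alert` function that stands in for a paging integration are all assumptions made for illustration.

```python
from graphlib import TopologicalSorter

MAX_RETRIES = 2  # assumed retry budget per task

alerts = []  # stand-in for a paging or chat integration


def alert(message):
    alerts.append(message)


def run_pipeline(tasks, dependencies, max_retries=MAX_RETRIES):
    """Run tasks in dependency order; retry failures, then alert and stop."""
    completed = []
    # static_order() yields each task only after all of its upstream tasks.
    for name in TopologicalSorter(dependencies).static_order():
        for attempt in range(1, max_retries + 2):
            try:
                tasks[name]()
                completed.append(name)
                break
            except Exception as exc:
                if attempt > max_retries:
                    alert(f"task {name} failed after {attempt} attempts: {exc}")
                    return completed  # downstream tasks are skipped
    return completed


attempts = {"transform": 0}


def extract():
    pass


def transform():
    # Fails on the first attempt only, to simulate a transient error.
    attempts["transform"] += 1
    if attempts["transform"] == 1:
        raise RuntimeError("transient failure")


def load():
    pass


tasks = {"extract": extract, "transform": transform, "load": load}
# Each key maps a task to the set of tasks it depends on.
dependencies = {"transform": {"extract"}, "load": {"transform"}}

completed = run_pipeline(tasks, dependencies)
print(completed, alerts)
```

A production orchestrator adds what this sketch omits: schedules, backoff between retries, persisted run history, and a user interface for inspecting failed runs.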

Phase 6: Operations and Maintenance

The data engineering lifecycle does not end after deployment. Production systems require ongoing monitoring, maintenance, and optimization to ensure they continue to run reliably and efficiently. This is often the longest phase of the lifecycle.

Key Activities:

Monitoring: The data engineer keeps a close eye on the production pipelines, responding to alerts and troubleshooting issues as they arise.

Optimization: The data engineer looks for opportunities to improve the performance and reduce the cost of the pipelines. This could involve tuning Spark jobs, optimizing SQL queries, or right-sizing cloud resources.

Maintenance: The data engineer performs regular maintenance tasks, such as upgrading software versions, applying security patches, and archiving old data.

Iteration and Improvement: The data engineering lifecycle is a continuous loop. Based on feedback from users and new business requirements, the data engineer will go back to the requirement gathering phase to start the cycle all over again, adding new features and improving the existing system.

Example: The data engineer notices that the user engagement pipeline is running slower than expected. They inspect the Flink web UI and find a performance bottleneck. After optimizing the Flink job, the pipeline runs 30% faster. A few weeks later, the data analyst comes back with a request to add a new metric to the dashboard, and the lifecycle begins again.
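Noticing that a pipeline is "running slower than expected" does not have to rely on someone watching a dashboard. One simple approach, sketched below, compares the latest run duration with the median of recent runs. The tolerance factor and the sample durations are assumptions for illustration. A real setup would read durations from the orchestrator's run history.

```python
from statistics import median

SLOWDOWN_TOLERANCE = 1.5  # assumed: flag runs more than 50% slower than baseline


def is_regression(history_seconds, latest_seconds, tolerance=SLOWDOWN_TOLERANCE):
    """Return True when the latest run is much slower than the median past run."""
    if not history_seconds:
        return False  # no baseline yet, nothing to compare against
    return latest_seconds > median(history_seconds) * tolerance


recent_runs = [300, 310, 295, 305, 320]  # hypothetical durations in seconds

slow_run_flagged = is_regression(recent_runs, 520)
normal_run_flagged = is_regression(recent_runs, 315)
print(slow_run_flagged, normal_run_flagged)
```

The median is used instead of the mean so that a single unusually slow run in the history does not shift the baseline.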

By following this structured lifecycle, data engineers can move beyond ad-hoc scripting and build professional, production-grade data systems that are reliable, scalable, and create lasting value for the organization.

1.5 How This Book Is Organized: A Roadmap for Your Journey

This book is structured to take you on a journey through the world of data engineering, from the foundational concepts to advanced, real-world applications. We have organized the content into six logical parts, each building on the previous one. Our goal is to provide a clear, step-by-step roadmap that will guide you from a beginner to a competent and confident data engineer.

Part 1: Foundations of Data Engineering (Chapters 1-3)

This is where your journey begins. In this first part, we will lay the groundwork for everything that follows. We have already started in this chapter by introducing the field of data engineering, the roles within a modern data team, and the key trends shaping the data landscape. In the next two chapters, we will dive deeper into the core concepts of data, including data modeling and storage paradigms, and we will explore the vibrant open-source ecosystem that powers modern data engineering.

Part 2: Data Storage Solutions (Chapters 4-7)

With the foundations in place, we will move on to the first practical challenge: storing data. This part is dedicated to the wide variety of data storage solutions that a data engineer must master. We will start with the workhorses of the industry, relational databases like PostgreSQL and MySQL. We will then explore the world of NoSQL databases, such as MongoDB and Cassandra, and learn when to use them. Finally, we will cover the cornerstones of modern data platforms: object storage, data lakes, data warehouses, and the emerging lakehouse architecture.

Part 3: Data Processing and Orchestration (Chapters 8-9)

Once you know how to store data, the next step is to learn how to process it. This part is all about data transformation and workflow management. We will take a deep dive into the most important data processing frameworks, including the industry-standard Apache Spark. You will learn how to write efficient, scalable data transformation jobs. We will then cover the critical topic of data orchestration, exploring tools like Apache Airflow that allow you to schedule, monitor, and manage complex data pipelines.

Part 4: Data Governance, Security, and Cloud Platforms (Chapters 10-12)

Building data pipelines is one thing; building them in a secure, compliant, and well-governed way is another. In this part, we will cover the crucial non-functional aspects of data engineering. We will discuss data governance, including data quality, data lineage, and metadata management. We will explore data security best practices for protecting sensitive data. We will also take a practical look at how to build and manage data platforms on a major cloud provider, using Alibaba Cloud as our example.

Part 5: Data Engineering for AI and ML (Chapters 13-16)

This part is dedicated to one of the most exciting and fastest-growing areas of data engineering: supporting artificial intelligence and machine learning. We will explore the unique challenges of data engineering for AI/ML and cover the key technologies and patterns. This includes building data pipelines for Retrieval-Augmented Generation (RAG) applications, engineering ML pipelines, building and managing feature stores, and working with vector databases and embeddings.

Part 6: Business Applications and Case Studies (Chapters 17-18)

In the final part, we will bring everything together and see how data engineering is applied in the real world. We will explore a variety of common business use cases for data engineering, from building customer 360 platforms to detecting fraud in real time. We will also walk through several end-to-end case studies, showing how the tools and techniques we have learned throughout the book can be combined to solve complex business problems.

Hands-On Exercises and a GitHub Repository

This is not a book to be read passively. To truly learn data engineering, you must get your hands dirty. That is why every chapter includes practical, hands-on exercises that let you apply what you have learned. All the code and examples from this book are available in a public GitHub repository, which you can use as a reference and as a starting point for portfolio projects of your own.

Chapter Summary

In this chapter, we have embarked on our journey into the world of data engineering. We have defined what data engineering is and why it is the critical foundation for any data-driven organization. We have explored the anatomy of a modern data team, understanding the distinct but complementary roles of the data architect, data engineer, data analyst, and data scientist. We have surveyed the modern data landscape, diving into the challenges and opportunities presented by the Five Vs of Big Data, the shift to real-time streaming, the dominance of the cloud, and the rise of AI and ML. We have also walked through the data engineering lifecycle, a structured process for building production-grade data systems. Finally, we have laid out the roadmap for the rest of this book.

You should now have a clear understanding of what data engineering is, why it is so important, and what a data engineer does. You are ready to move on from the high-level overview and start diving into the core technical concepts that will form the basis of your data engineering skill set. In the next chapter, we will begin this technical journey by exploring the fundamental concepts of data itself.